I previously published a guide to setting up TensorFlow in an Anaconda environment with GPU support. A lot of people liked it, and I have been working with that environment myself for more than a year now. I am happy with the results, but the process is a bit involved: it requires quite a bit of configuration at the OS level, as well as setting up the CUDA and cuDNN libraries on your Windows machine. The problem with this kind of setup is that your GPU driver and the installed CUDA and cuDNN versions affect which TensorFlow environments are available to you. On Windows, NVIDIA ships frequent graphics driver updates, and these can break your environments. Running everything in a conda environment gives you some relief by isolating the Python dependencies, but the GPU driver, CUDA, and cuDNN still have to be installed on the host machine, so their versions can go out of sync after automatic Windows updates. TensorFlow is also very strict about which CUDA versions it supports. So what can we do?

Docker Comes to the Rescue

Docker is a great tool for creating virtualized environments that are isolated at the kernel level, which means a container does not share its software configuration with the host machine. This article assumes some familiarity with Docker. If you are new to Docker, I recommend quickly going through this getting started guide. I recently came across a simple way to pass GPUs through to a Docker container: the operating system exposes your native GPU as a shared device to the Docker host, so the container has access to the GPU and you don’t need to install the CUDA or cuDNN libraries on your host machine.

In this article I will guide you step by step through using one of the Docker images I have created. It contains most of the popular machine learning libraries along with Jupyter notebooks, so you can get up to speed quickly.

My Environment

One of the advantages of using Docker is that your host environment matters very little for what is available inside the containers, but I am still listing mine to give an idea of the machine I used for this guide.

My environment is as follows.

- Operating System: Windows 11 Pro (everything in this article should work on any Windows 11 edition)

- Processor: AMD Ryzen Threadripper 2970WX (the CPU doesn’t matter)

- Motherboard: ASUS ROG X399 (any motherboard is fine)

- Graphics Card: NVIDIA GeForce RTX 2070 8GB GDDR6 (any RTX or Quadro GPU is fine)

Dependencies

To follow along with this guide you will need to install the following on your host machine.

- GPU driver for your NVIDIA graphics card: follow the instructions in the "Step 1: NVIDIA Graphics Driver Installation" section of my earlier article, How To: Setup Tensorflow With GPU Support in Windows 11.

- Docker Desktop: to install Docker Desktop on your OS, please follow the Get Docker documentation.

No additional software is needed after installing these two dependencies. This is the beauty of using a virtualization solution like containers. Did I just say that I ❤️ Docker?
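Before moving on, it can be worth confirming that Docker can actually see the GPU. The sketch below is not part of the original setup: it assumes the `docker` CLI is on your PATH and that the `--gpus all` flag with the stock `ubuntu` image works on your installation. The helper names `gpu_check_command` and `run_gpu_check` are hypothetical.

```python
import shutil
import subprocess


def gpu_check_command(image="ubuntu"):
    """Build a `docker run` command that runs nvidia-smi in a throwaway container."""
    # --rm removes the container afterwards; --gpus all requests every GPU.
    return ["docker", "run", "--rm", "--gpus", "all", image, "nvidia-smi"]


def run_gpu_check(image="ubuntu"):
    """Run the check and return True if nvidia-smi exited successfully."""
    if shutil.which("docker") is None:
        print("The docker CLI was not found on PATH.")
        return False
    result = subprocess.run(
        gpu_check_command(image), capture_output=True, text=True
    )
    # Show whatever the container printed (the GPU table, or an error message).
    print(result.stdout or result.stderr)
    return result.returncode == 0
```

Calling `run_gpu_check()` should print the familiar `nvidia-smi` table if GPU passthrough is working. If it returns `False`, the driver or Docker Desktop setup is the first place I would look.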

Configuring a Python Environment

Now we are ready to create a new environment and start building deep-learning models using TensorFlow with full GPU support.

Step 1: Create a folder for code and docker-compose

We will use docker-compose to quickly spin up and destroy ephemeral environments for our machine learning projects. Create a new folder on your machine and, in its root, create a new file called docker-compose.yml. This file defines the environment we will be working with. Paste the following code into the newly created docker-compose.yml file.

    version: '3.0'
    services:
      tensorflow:
        container_name: tensorflow-gpu
        image: thegeeksdiary/tensorflow-jupyter-gpu:latest
        restart: unless-stopped
        volumes:
          - ./notebooks:/environment/notebooks
          - ./data:/environment/data
        deploy:
          resources:
            reservations:
              devices:
                - driver: nvidia
                  device_ids: ['0']
                  capabilities: [gpu]
        ports:
          - '8888:8888'
        networks:
          - jupyter
    networks:
      jupyter:
        driver: bridge

Step 2: Create Directories For Notebooks & Data

Now create two directories in the root of the code folder from the previous step and name them notebooks and data (see lines 8 and 9 of the docker-compose.yml file in the previous section). These directories are mounted into the container that we will spin up in the next step. This lets you pass data files (such as CSV or JSON files) from your host machine to the Jupyter notebooks running inside the container, and it saves the notebooks you create back to your host machine so that you can, for example, check them into a version control system like git.
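If you prefer to script this step, the two directories can be created with a few lines of Python. This is only a convenience sketch; `create_project_dirs` is a hypothetical helper name, and creating the folders by hand works just as well.

```python
from pathlib import Path


def create_project_dirs(root="."):
    """Create the notebooks and data directories under root and return their paths."""
    created = []
    for name in ("notebooks", "data"):
        path = Path(root) / name
        # exist_ok=True makes the call safe to repeat on an existing project.
        path.mkdir(parents=True, exist_ok=True)
        created.append(path)
    return created


if __name__ == "__main__":
    # Run from the folder that contains docker-compose.yml.
    for path in create_project_dirs():
        print("ready:", path)
```

The directory names must match the left-hand side of the volume mappings in docker-compose.yml, otherwise the container will not see your files.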

Step 3: Open The Jupyter Notebook & Start Modeling

In the root of your repo/code folder, type the command below:

    docker-compose up

This can take a while depending on your machine and network bandwidth, as the image is approximately 9 GB in size. You might need to increase the storage allocated to Docker Desktop on your machine. I am using the WSL backend for Docker, so I am not affected by the image size, but if you are still using Hyper-V as the virtualization backend then this article might help you. Once the container is running, you should see something like this in your terminal.

    /usr/local/lib/python3.8/dist-packages/traitlets/traitlets.py:2544: FutureWarning: Supporting extra quotes around strings is deprecated in traitlets 5.0. You can use '' instead of "''" if you require traitlets >=5.
      warn(
    [I 16:50:53.312 NotebookApp] Writing notebook server cookie secret to /root/.local/share/jupyter/runtime/notebook_cookie_secret
    [I 16:50:53.313 NotebookApp] Authentication of /metrics is OFF, since other authentication is disabled.
    [W 16:50:53.718 NotebookApp] All authentication is disabled. Anyone who can connect to this server will be able to run code.
    [I 16:50:54.492 NotebookApp] jupyter_tensorboard extension loaded.
    [I 16:50:54.537 NotebookApp] JupyterLab extension loaded from /usr/local/lib/python3.8/dist-packages/jupyterlab
    [I 16:50:54.537 NotebookApp] JupyterLab application directory is /usr/local/share/jupyter/lab
    [I 16:50:54.539 NotebookApp] [Jupytext Server Extension] NotebookApp.contents_manager_class is (a subclass of) jupytext.TextFileContentsManager already - OK
    [I 16:50:54.540 NotebookApp] Serving notebooks from local directory: /environment
    [I 16:50:54.540 NotebookApp] Jupyter Notebook 6.4.10 is running at:
    [I 16:50:54.540 NotebookApp] http://hostname:8888/
    [I 16:50:54.540 NotebookApp] Use Control-C to stop this server and shut down all kernels (twice to skip confirmation).

Once the container is running, open a browser on your machine and navigate to http://localhost:8888/tree. You should be presented with a UI similar to the image below.

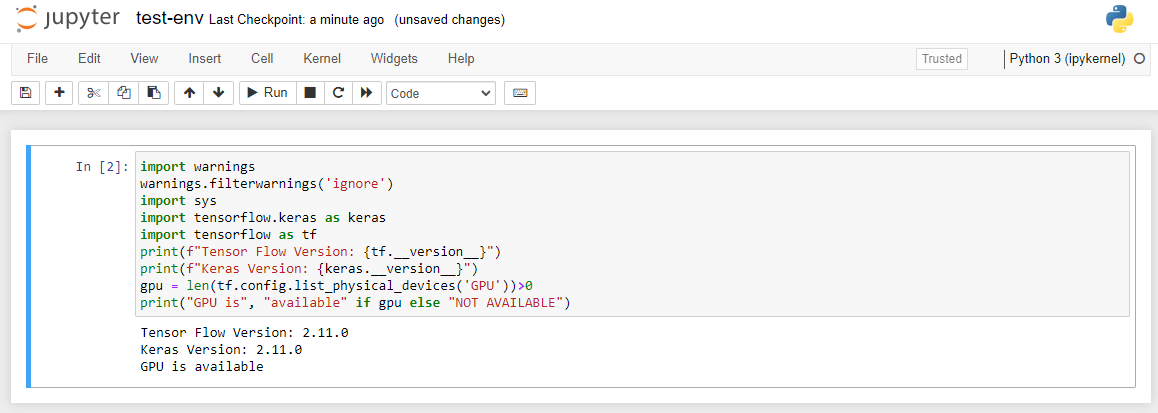
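If you script the startup, it can help to wait until the notebook server actually answers before opening the browser. The sketch below polls the URL using only Python's standard library. `wait_for_server` and its parameters are hypothetical names of mine, not something provided by the image or by Jupyter.

```python
import time
import urllib.error
import urllib.request


def wait_for_server(url, timeout=60.0, interval=2.0):
    """Poll url until it answers or timeout seconds pass; return True if reachable."""
    # An empty ProxyHandler bypasses any system proxy, which suits localhost checks.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            with opener.open(url, timeout=interval):
                return True
        except urllib.error.HTTPError:
            # The server replied, even if with an error status, so it is up.
            return True
        except OSError:
            # Connection refused or timed out: wait a little and try again.
            time.sleep(interval)
    return False


# Example (assumes the port mapping from docker-compose.yml):
# wait_for_server("http://localhost:8888/tree")
```

The first start can take much longer than a minute while the image downloads, so a larger `timeout` may be needed on a slow connection.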

You can now open the included notebook test-env.ipynb and run its first and only cell to verify that TensorFlow and the GPU are indeed available in your new environment. You should see output as shown below upon running the first cell.
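The contents of test-env.ipynb are not reproduced here, but a comparable check that you can paste into any new notebook cell might look like the sketch below. It assumes TensorFlow 2.x, where `tf.config.list_physical_devices` is available. The helper name `list_gpu_names` is hypothetical.

```python
def list_gpu_names():
    """Return the names of the GPUs TensorFlow can see, or [] if there are none."""
    try:
        import tensorflow as tf
    except ImportError:
        # Outside the container TensorFlow may simply not be installed.
        print("TensorFlow is not installed in this environment.")
        return []
    return [gpu.name for gpu in tf.config.list_physical_devices("GPU")]


names = list_gpu_names()
print("GPUs visible to TensorFlow:", names)
```

An empty list inside the container usually means the GPU was not passed through, in which case the `deploy` section of docker-compose.yml and the host driver are worth rechecking.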

Conclusion

I maintain the Docker images provided above and use them for my own machine learning development workflows. I hope you found this article helpful. If you run into any issues or have ideas for improving certain aspects, please drop a comment below; I am sure it will help. Also, please check out my other articles for more useful tutorials.

I have published a series on deep learning using TensorFlow. Check out that article here.

https://thegeeksdiary.com/2023/03/23/introduction-to-deep-learning-with-tensorflow-deep-learning-1/
